I’m trying to use Undetectable AI’s Humanizer to make AI-written content pass as human for clients, but I’m not sure how reliable or safe it really is. Has anyone tested it at scale against popular AI detectors, and did it affect readability, SEO, or get any penalties or flags? I need real user feedback before I commit to using it in my workflow.

Undetectable AI review, from someone who poked at the free tier way too long

I spent a few evenings messing with Undetectable AI using only the free Basic Public model. No upgrade, no credits, nothing fancy. The results were weirdly mixed.

If you only care about fooling detectors, it did better than I expected. Using the ‘More Human’ setting, I saw:

- ZeroGPT scores go down to around 10 percent AI

- GPTZero hovering near 40 percent AI

Those numbers beat a bunch of paid tools I had tested earlier. I cross-checked across multiple runs, with different topics and different lengths. The pattern stayed consistent enough that I stopped assuming it was luck.

The catch is the writing.

On the ‘More Human’ mode, I would give the output something like 5 out of 10 for quality. It constantly shoved first person language into places where it made no sense. Stuff like:

- “I think”

- “In my opinion”

- “From my experience”

Even when the input text was neutral and impersonal, the rewrite turned into a weird pseudo-blog post, like someone trying too hard to sound personal for a school assignment.

Other issues I kept seeing:

- Repeated key phrases, almost like soft keyword stuffing

- Sentence fragments that looked unintentional, not stylistic

- Awkward transitions between paragraphs

I tried ‘More Readable’ next. That mode behaved a bit better. Fewer random first person injections, slightly smoother flow. Still not at the level where I would publish it without extra editing. Every sample needed a pass to fix repetition and odd phrasing.

About the paid features, from their public info and what the UI shows:

- Extra models: Stealth and Undetectable

- Five reading levels

- Nine “purpose” presets

- Slider for intensity of the changes

If the free public model already drops ZeroGPT to 10 percent AI, I guess the paid ones go lower or stay more consistent on longer text. I did not pay to verify, so take that as an assumption, not a fact.

Pricing and policy stuff that bothered me a bit:

- Pricing starts at $9.50 per month on annual billing for 20,000 words

- The privacy policy mentions collection of detailed demographic data, including income and education level

- Refunds are conditional: you need to prove your content scored under 75 percent human in an AI detector within 30 days

That last part matters. The marketing leans on a “money-back guarantee”, but in practice you have to:

- Run your text through a detector

- Get a bad human score

- Save proof

- Contact them within 30 days

So it is not a no-questions-asked refund. More like a conditional one.

My take after testing:

- If your priority is lowering AI detector scores and you do not mind editing, the free model already does decent work for that narrow goal.

- If you need clean, publishable text with minimal edits, you will spend time fixing what it spits out.

- If you are privacy sensitive, read their policy closely before signing up. Related write-up: "Undetectable AI Humanizer Review with AI-Detection Proof".

I ended up keeping it in my “toolbox” for detection evasion experiments, but I never trusted it to produce finished copy without a careful rewrite by hand.

I run a small content shop and I’ve used Undetectable AI at scale for blog posts and emails for clients who obsess over “AI-free” content.

Short version: it helps with detectors, it does not save you from editing, and it carries some risk.

My setup and volume

• Roughly 150k to 200k words over 2 months

• Long form posts, 1.5k to 3k words each

• Mostly paid tiers, Stealth and Undetectable models

• Mixed sources, GPT‑4, Claude, some human drafts

Detectors I tested

• Originality.ai

• ZeroGPT

• GPTZero

• Writer.com detector

• Copyleaks

• A couple of in‑house smaller models

Results on detection

Your mileage will differ by topic and style, but this is what I saw, averaged across a lot of pieces.

Raw LLM output, no humanizer

• Originality.ai: 80 to 99 percent AI

• GPTZero: flags most long pieces as “likely AI”

• ZeroGPT: 70 to 100 percent AI

After Undetectable AI, “More Human” or Stealth

• Originality.ai: often 20 to 60 percent AI, some under 10 percent

• GPTZero: mixed, sometimes passes, sometimes reports “mixed”

• ZeroGPT: often under 15 percent AI on shorter chunks, long pieces still spike

So I disagree a bit with @mikeappsreviewer on one point. On large articles, I did not see consistently low scores. It helped, but longer text tends to get flagged again, especially by Originality and Copyleaks.

Quality problems

This is where it hurts you with clients.

Issues I saw a lot

• Fake “personal” tone. Same as what Mike said, but even on “More Readable” I still got “I think” sprinkled where it felt off.

• Overuse of transitional phrases. “On the other hand”, “for example”, “in summary” show up way too often.

• Meaning drift. On technical content, some sentences lost precision. For example, security instructions softened or changed slightly. That is a big risk.

• Style mismatch. If your client has a strict voice guide, you will spend time fixing that voice.

For client work, every piece needed a human edit. On average that took 10 to 25 minutes per 1,500 words, mostly to:

• Remove fake opinions

• Fix repeated phrases

• Restore technical accuracy

• Align with brand voice
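To put that editing cost in perspective, here is a back-of-envelope sketch in Python. It only multiplies out the figures quoted in this post (10 to 25 minutes per 1,500 words, 150k to 200k words over two months). The function name `edit_hours` is made up for illustration, and the result is a rough range, not a measurement.

```python
def edit_hours(total_words, minutes_per_unit, unit_words=1500):
    """Hours of human editing for total_words at a per-unit editing rate."""
    return total_words / unit_words * minutes_per_unit / 60


# Volumes and rates are the ones quoted above; everything else is arithmetic.
for words in (150_000, 200_000):
    low = edit_hours(words, 10)   # best case: 10 minutes per 1,500 words
    high = edit_hours(words, 25)  # worst case: 25 minutes per 1,500 words
    print(f"{words:,} words: {low:.1f} to {high:.1f} hours of editing")
```

At those rates the cleanup alone lands somewhere between roughly 17 and 56 hours over the two months, which is worth budgeting for before you quote a client.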

Reliability and safety

Three angles here.

- Detection risk

Detectors change fast. Stuff that passes today fails tomorrow. I had one old article that passed as “90 percent human” in April and then showed up as “mostly AI” in June on the same tool. If your client writes “guaranteed human” into a contract, this is a risk you own, not the tool.

- Policy risk

Some clients forbid AI rewriting in their contracts. If they ever run forensic checks or use multiple detectors, you will have a problem if you promised “no AI involvement”. Humanizer tools do not remove that risk.

- Privacy

I share some of Mike’s concern, but I’m slightly more paranoid. I avoid sending sensitive drafts, internal docs, legal text, or anything that contains client-specific proprietary terms. For those, I either edit by hand or use a local setup.

If you still want to use Undetectable AI for clients

Here is how I’d treat it.

• Treat it as a helper for first passes on detection, not a magic filter.

• Always keep the original version and the “humanized” one. Sometimes the original reads better and you merge them.

• Test on the same detectors your clients use. If they use Originality.ai, optimize for that, not for ZeroGPT screenshots.

• Do not promise 100 percent human scores in your contracts. Promise quality and accuracy instead.

• On technical or legal topics, compare each paragraph line by line for meaning drift.
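The paragraph-by-paragraph comparison in the last point can be partly triaged with a script. Below is a minimal sketch using Python's standard `difflib`. It assumes paragraphs are separated by blank lines and that the humanizer kept the paragraph order. The function name `flag_drift` and the 0.6 threshold are arbitrary choices for illustration. Surface similarity is only a proxy: a low score tells you where to look first, it does not prove the meaning changed, and a high score does not prove it stayed the same.

```python
import difflib


def flag_drift(original, humanized, threshold=0.6):
    """Pair paragraphs by position and flag pairs whose text similarity is low.

    Returns a list of (paragraph_index, similarity) tuples. Extra paragraphs
    in either version are ignored because zip() stops at the shorter list.
    """
    orig_paras = [p.strip() for p in original.split("\n\n") if p.strip()]
    new_paras = [p.strip() for p in humanized.split("\n\n") if p.strip()]
    flagged = []
    for i, (a, b) in enumerate(zip(orig_paras, new_paras)):
        ratio = difflib.SequenceMatcher(None, a, b).ratio()
        if ratio < threshold:
            flagged.append((i, round(ratio, 2)))
    return flagged


original = "Rotate the API key every 30 days.\n\nUse TLS 1.2 or newer."
humanized = (
    "Rotate the API key every 30 days.\n\n"
    "I think encryption is usually a good idea."
)
for index, score in flag_drift(original, humanized):
    print(f"Paragraph {index} changed heavily (similarity {score}), review by hand")
```

Anything the script flags still needs a human read. The script only orders the work so the most heavily rewritten paragraphs get checked first.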

Alternative worth a look

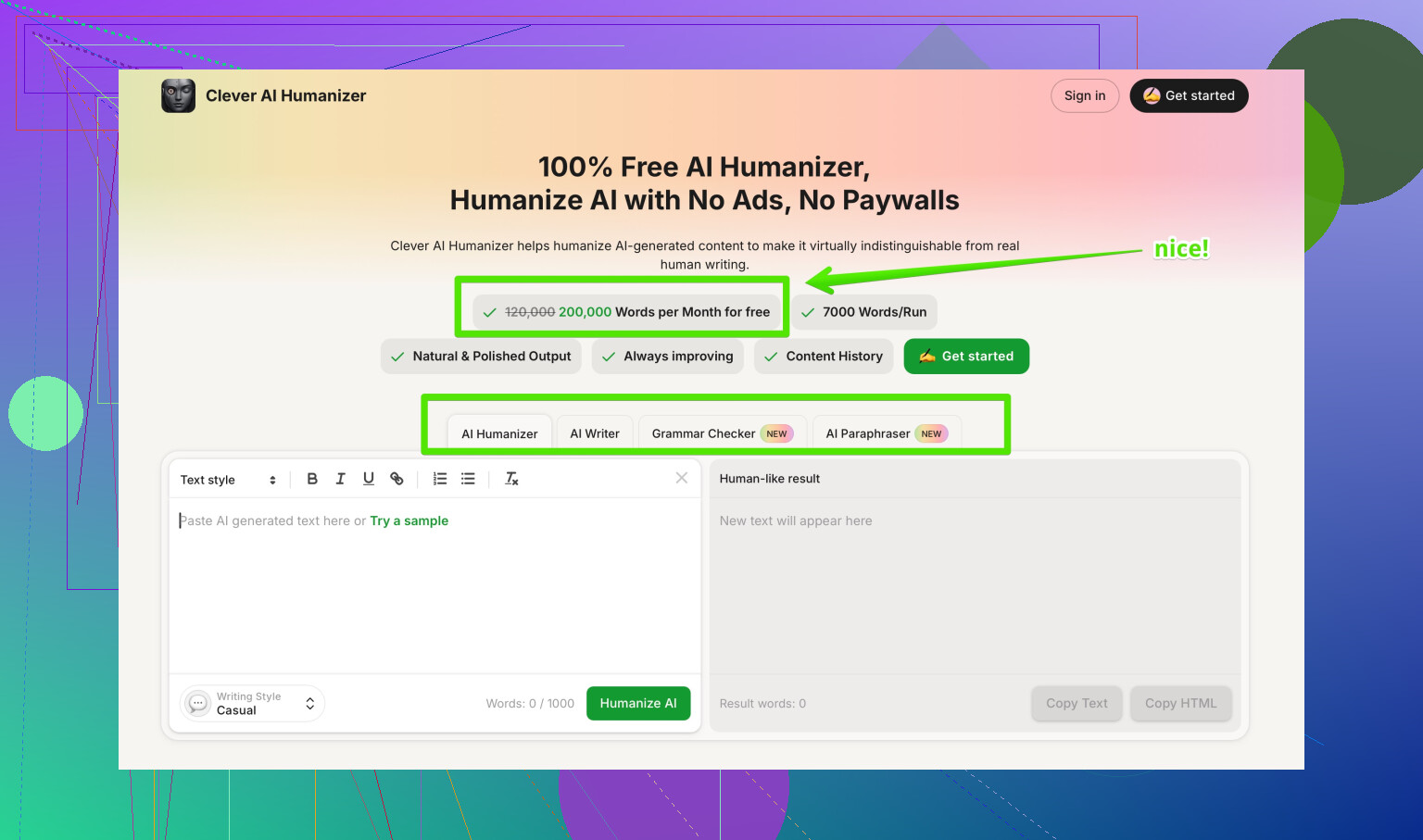

If you want something focused on more natural rewrites and clearer outputs, I had better luck with Clever AI Humanizer for some workloads. It tends to keep structure cleaner and reduces the weird “fake bloggy” tone.

If you care about SEO and human readability together, it helps to run content through a tool that focuses on natural language patterns and context, not only “detector evasion”. A tool aimed at making AI text sound more natural and human, for readers and detectors alike, is useful when you want cleaner flow and simpler editing afterward.

My current rule

• For low‑risk, generic blog content, I still use Undetectable AI sometimes, with edits and cross‑checks.

• For important client content, anything with brand risk or legal risk, I use AI only as an assistant and do manual rewriting and style control.

If your main goal is to protect your relationship with clients, focus less on “passing AI detectors” and more on

• Consistent voice

• No hallucinations

• Clear ownership of the process in your contracts

Detectors are moving targets. Your reputation is not.

I’ve run Undetectable AI for client work too, and my experience lines up partly with @mikeappsreviewer and @viaggiatoresolare, but I’d be a bit harsher on the “reliability” side.

At scale, my take:

- It absolutely can drop scores on popular detectors (Originality, GPTZero, ZeroGPT, etc.), but it is not stable over time or across full articles. A piece that passes nicely today can get re-flagged when detectors update. So I would not treat it as any kind of long-term “shield.”

- Longer posts are where it starts to fall apart. On 2k–3k word articles, I kept seeing chunks that still looked statistically “LLM-ish,” and some detectors nailed those parts anyway.

- Quality control is the real cost. The weird pseudo-personal tone others mentioned kept creeping in, plus I had cases where factual / technical sentences got softened or slightly altered. That is the part that can quietly burn you with clients, not the detectors themselves.

Where I slightly disagree with the other reviews: I don’t think the “fix it in post” editing pass is always trivial. On technical or compliance-heavy content, you can spend almost as much time hand-correcting the humanized draft as you would just rewriting from a clean AI draft yourself. At that point, the value of Undetectable AI becomes questionable unless your client literally judges you only on detector screenshots.

If you do keep using it:

- Never promise “AI free” or “100% human” in a contract. Promise “accurate, well written content.”

- Keep a local copy of the original and the humanized version and be ready to merge the best parts.

- Avoid sending anything sensitive through it, especially client-internal docs.

Competitor-wise, Clever AI Humanizer is actually worth testing side by side if you care about natural style and less clean-up. It tends to produce more readable text out of the gate and feels more like a clarity-focused editor than a pure detector-dodger. For day-to-day client work, that balance of human readability plus lower detector scores is usually more important than chasing a 5–10 percent bump on some AI score bar.

If you are trying to figure out which tools are actually working in the current detection climate, there is a pretty solid breakdown of top options here:

best AI humanizer tools people actually use

Bottom line: Undetectable AI is “useful but volatile.” Treat it as a noisy filter, not as safety gear for your client relationships.

Quick analytical take, since others already covered workflows in detail.

Where I agree with @viaggiatoresolare / @sternenwanderer / @mikeappsreviewer:

- Undetectable AI can significantly lower scores on common detectors.

- Longer content gets unstable, especially once detectors update.

- You are still on the hook for real editing and factual checks.

- “Guaranteed human” is a contractual landmine.

Where I slightly disagree:

- I would not treat detector scores as the primary KPI at all. In practice, clients that scream “AI free” mostly calm down if:

  - The text matches their voice

  - There are no obvious hallucinations

  - Their own tool screenshot looks “good enough,” not perfect

Chasing 0–5 percent AI often leads to overprocessed, mushy prose that readers (and good editors) can spot as artificial, even if detectors miss it.

How I’d frame Undetectable AI in a real client setup:

Pros

- Handy as a quick style reshaper for generic blog posts.

- Useful when a client has already committed to one specific detector and you can test against that.

- Faster than manually rewriting everything from scratch if the topic is low risk.

Cons

- Volatile over time. Old content can start failing after detector updates.

- Meaning drift on nuanced topics like legal, medical, or security.

- Noticeable “LLM flavor” on long pieces, which human reviewers can call out even if tools do not.

- Privacy / ToS angle is nontrivial if you work with sensitive docs.

On the “is it safe at scale” question:

- Operationally “safe” only if:

  - Content is generic and non-sensitive.

  - You retain full editorial control and explicitly budget time for revision.

  - Your contract says “we may use AI” and does not promise AI-free creation.

- Not “safe” if:

  - The client legally forbids AI rewriting.

  - The brand has high reputational or regulatory exposure.

  - You are relying on it as a one-click shield against any future detector.

Where Clever AI Humanizer fits in:

Used side by side, my impression is:

Clever AI Humanizer – pros

- Tends to preserve structure better, so you do not end up with odd transitions all over.

- Outputs feel less like a forced “personal blog” voice and more like a clarity edit.

- Editing time after the fact is usually lower, since it aims at readability first, detection second.

Clever AI Humanizer – cons

- Still not “fire and forget.” You must verify facts and tone.

- On very strict brand voice guides, it can still flatten nuance, so style tweaks are needed.

- If you are purely obsessed with squeezing the lowest possible detector score, Undetectable AI can sometimes edge it out on that single metric.

If you want a practical division of labor:

- Use your base LLM (GPT, Claude, etc.) for:

  - Outline

  - Fact gathering (then manually verified)

  - Initial draft with explicit brand voice prompts

- Then:

  - For clients who mainly care about readability + plausible human feel: run a pass through Clever AI Humanizer, then do a focused human edit.

  - For clients who explicitly wave detector screenshots at you: test a short chunk in Undetectable AI, see how their detector reacts, and decide if it is worth the extra cleanup versus just tightening your own prompts and manual edits.

Final sanity checks I would do on anything “humanized,” regardless of tool:

- Read aloud: if it sounds like a generic blog article from nowhere, rewrite key sections.

- Paragraph-by-paragraph fact check on technical topics.

- Search within the doc for repeated phrases and remove the obvious patterns.

- Keep a change log or at least both versions archived, in case a client questions authenticity later.
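The repeated-phrase check in that list is easy to script. Here is a rough sketch in Python that counts word n-grams and reports the ones that show up often. The function name `repeated_phrases` and the defaults (three-word phrases, at least three occurrences) are assumptions for illustration, so tune them to your own content. It only matches exact repeats, so near-duplicates with small wording changes will slip through.

```python
import re
from collections import Counter


def repeated_phrases(text, n=3, min_count=3):
    """Return word n-grams occurring at least min_count times, most frequent first."""
    # Lowercase and split into simple word tokens; punctuation is dropped.
    words = re.findall(r"[a-z0-9']+", text.lower())
    grams = Counter(
        " ".join(words[i:i + n]) for i in range(len(words) - n + 1)
    )
    return [(gram, count) for gram, count in grams.most_common() if count >= min_count]


sample = (
    "On the other hand, it works. On the other hand, it fails. "
    "On the other hand, who knows."
)
for phrase, count in repeated_phrases(sample):
    print(f"{count}x  {phrase}")
```

Overlapping n-grams from the same repeated phrase will all be listed, so expect some redundancy in the output. The point is to find the obvious patterns quickly, then fix them by hand.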

Tools like Undetectable AI and Clever AI Humanizer are useful, but your real moat with clients is:

- Transparent process

- Strong voice control

- Willingness to edit ruthlessly when the tool output is “detector-safe” but human-weird.