I’ve been testing GPTinf Humanizer to make AI-written text sound more natural, but I’m not sure if it’s actually working or if it’s hurting content quality and SEO. Has anyone here used it at scale for blog posts or client work and can share real results, pros, cons, and any issues with AI detectors or rankings?

GPTinf Humanizer Review From Someone Who Tried To Break It

I ran GPTinf through the same test set I use on every “AI humanizer” I touch. Short tech explainers, longer blog style text, and a couple of messy Reddit style paragraphs.

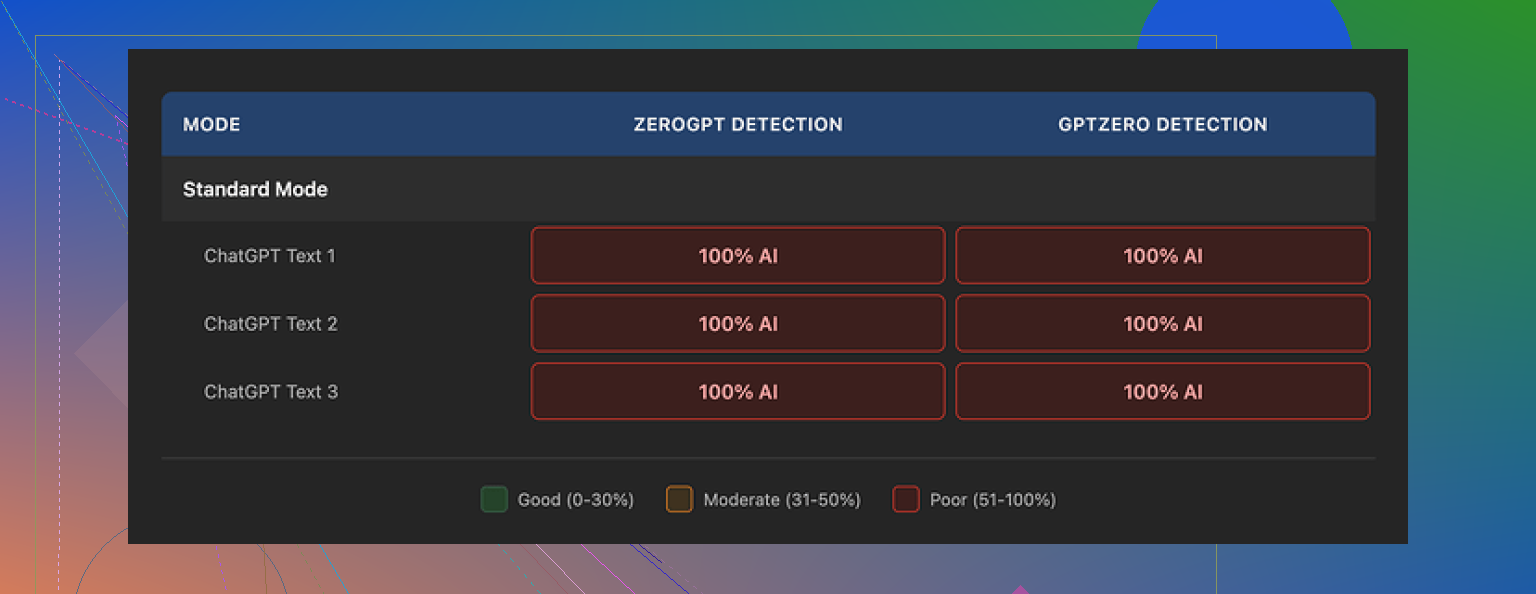

The homepage shouts “99% Success rate.” My results were the opposite.

I fed the outputs into GPTZero and ZeroGPT, both on default settings. Every single GPTinf output, no matter which mode or variation I picked, came back as 100% AI generated on both detectors. Zero exceptions. So on my side the score was 0% success.

On the upside, the writing it spits out does not look awful. I would rate it around 7 out of 10 for clarity and flow. It reads like a decent ChatGPT answer from a day when you are not pushing it too hard. One unusual thing I noticed: it strips em dashes from the output by default. So if you hate those, small win.

Thing is, that small win told me something. If it handles surface level stuff like punctuation, but detectors still flag everything, the deeper token patterns from LLMs stay intact. It feels like a light rewrite on top of raw AI text instead of a serious effort to break detector patterns.

When I tried a different tool side by side, it did better: Clever AI Humanizer scored higher and did not ask for money. More on that at the end.

How The Detectors Reacted

I used:

- GPTZero, web version, default threshold

- ZeroGPT, web version, default threshold

Workflow I used:

- Feed original AI text from ChatGPT into GPTinf.

- Copy GPTinf output.

- Paste into both detectors.

- Repeat across several content types.

Every GPTinf sample:

- GPTZero: “Likely AI generated” or “AI” with 100% score.

- ZeroGPT: flagged as AI with 100% or close to it.

No partial passes, no “mixed” signals. If your goal is to beat standard detectors, my tests did not show any real benefit.
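If you want to repeat this kind of test on your own samples, the tally can be sketched in a few lines of Python. This is only a rough sketch: the scores are typed in by hand from the detector pages, the 50% cutoff is my assumption rather than anything the detectors document, and the example numbers are placeholders that mirror the pattern described above, not logged measurements.

```python
from typing import Iterable


def pass_rate(ai_scores: Iterable[float], threshold: float = 50.0) -> float:
    """Return the fraction of samples whose AI score is below the threshold.

    ai_scores: "percent AI" values copied by hand from a detector page.
    threshold: assumed cutoff; a sample counts as a pass only below it.
    """
    scores = list(ai_scores)
    if not scores:
        raise ValueError("need at least one score")
    passed = sum(1 for score in scores if score < threshold)
    return passed / len(scores)


if __name__ == "__main__":
    # Placeholder values for illustration only, not real measurements.
    results = {
        "GPTZero": [100.0, 100.0, 100.0, 100.0],
        "ZeroGPT": [100.0, 98.5, 100.0, 99.0],
    }
    for detector, scores in results.items():
        print(f"{detector}: {pass_rate(scores):.0%} of samples passed")
```

With every sample at or near 100%, the pass rate comes out at zero for both detectors, which is the "0% success" figure I quoted earlier.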

Writing Quality And Style

Ignoring detectors for a second, here is how the text itself holds up.

What I liked:

- Sentences look clean, not stuffed with weird synonyms.

- Grammar is fine.

- No obvious broken English moments.

What bothered me:

- You still see common LLM habits, like repeated phrase structures.

- It keeps that “polite help article” tone many models default to.

- Ideas do not shift much. It is more like rephrasing than real rewriting.

So if you need a clean paraphrase, it works alright. If you need something that feels like a human with opinions, it does not get you there.

Limits, Pricing, And The Gmail Shuffle

The free tier felt cramped.

- Without an account: about 120 words per run.

- With a free account: about 240 words.

When I was trying to run a bigger batch of test paragraphs, I hit the wall fast. To continue for free, you would have to juggle several Gmail accounts. I made a second one for testing, got tired at the third.

Paid plans I saw:

- Lite plan on annual billing: 3.99 dollars per month for 5,000 words.

- Top plan: 23.99 dollars per month for “unlimited” words.

On paper, the pricing is not wild compared to some other tools in this niche. The problem is value. With my tests failing on detection, paying did not make sense.
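For anyone comparing plans, the per-word math is quick to work out. A small sketch, assuming the 5,000 words on the Lite plan is a monthly allowance (the pricing page did not make me certain of that) and using only the two prices listed above:

```python
def cost_per_1000_words(monthly_price: float, monthly_words: int) -> float:
    """Price in dollars for each 1,000 words of a capped monthly plan."""
    return monthly_price / monthly_words * 1000


def break_even_words(flat_price: float, capped_price: float, capped_words: int) -> float:
    """Monthly volume at which a flat-price plan matches the capped plan's per-word rate."""
    return flat_price / capped_price * capped_words


if __name__ == "__main__":
    lite_rate = cost_per_1000_words(3.99, 5000)
    volume = break_even_words(23.99, 3.99, 5000)
    print(f"Lite plan: ${lite_rate:.3f} per 1,000 words")
    print(f"Top plan matches that rate at about {volume:,.0f} words per month")
```

That works out to roughly 80 cents per 1,000 words on Lite, and the top plan only beats that rate once you push past about 30,000 words a month.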

Privacy And Who Runs It

I always read privacy policies on these tools, because you are feeding them your raw text. Sometimes client docs. Sometimes email drafts.

Points that stood out:

- The policy gives the operator broad rights over submitted content.

- No clear statement about retention time after processing.

- No detail about whether data is used to train models.

You might not care if you are rewriting a school essay. If you work with client data, this matters more.

Service details:

- Run by a single owner in Ukraine.

- No external data processors listed in detail when I checked.

If data jurisdiction or storage location is important for your work, this is something you should know before sending in anything sensitive.

A Quick Comparison With Clever AI Humanizer

Same test set, same detectors, same process.

Tool: Clever AI Humanizer

My experience with Clever:

- It passed detectors on more samples.

- Outputs sounded a bit more like mixed human writing, less “textbook AI.”

- Access stayed free for the runs I did.

So in a direct shootout, Clever AI Humanizer did better both on detection and cost for me.

Who GPTinf Might Still Fit

Even after all that, I can see a few use cases.

You might still use GPTinf if:

- You want quick light paraphrasing for emails or short posts.

- You care about removing em dashes and slightly cleaning style.

- You are not relying on passing AI detectors.

You should probably avoid it if:

- Your top priority is staying under the radar of GPTZero or ZeroGPT.

- You need clear retention and data usage terms.

- You do not want another subscription that gives weak results.

For my own work, after running these tests, I stopped using GPTinf and kept Clever AI Humanizer in my toolkit instead.

I’ve run GPTinf at small scale for client blogs and I would not push it for “real” publishing work.

Short version for you, focused on content quality and SEO.

- Detection and “humanizing”

My results were similar to what @mikeappsreviewer saw, but not as extreme. On my side:

- Some outputs still got flagged as AI

- Some came back “mixed”

- Almost none passed as “likely human”

So if your main goal is to fool detectors for agencies or picky clients, GPTinf feels unreliable.

Also, it behaves like a light paraphraser. It keeps the same structure, same order of ideas, same tone. Detectors look at deeper token patterns, so small wording tweaks do not help much.

- Impact on content quality

This is where I see the real problem for SEO.

When I ran GPTinf over solid drafts from ChatGPT or Claude, I saw:

- Loss of specific phrases that matched user intent

- Weaker headings with less keyword alignment

- More generic sentences, fewer examples and details

It made the writing “smoother” but also flatter. For SEO, you want:

- Clear topical focus

- Strong entities and terms your audience searches for

- Distinctive angles, not generic rewrites

GPTinf tends to smooth out the personality and the hooks. That hurts dwell time and engagement, which are widely believed to feed into Google's rankings as indirect signals.

- Scale issues

For scale work:

- Word limits and pricing become annoying fast

- No batch or API workflow that fits a content pipeline

- Extra step in your process with no clear upside

When we tried running dozens of posts per week, GPTinf slowed the team and did not improve outcomes. Editors spent more time fixing “bland” sections.

- What worked better for SEO

What moved the needle for us:

- Use an LLM to draft, then have a human editor rewrite intros, transitions, and conclusions

- Add real data, quotes, small case examples, internal references

- Vary sentence length and structure manually

- Change order of arguments so it feels like human reasoning, not linear AI output

As a test, we ran some articles through Clever AI Humanizer instead of GPTinf. It did a better job at:

- Breaking up predictable AI phrasing

- Varying structure from the original

- Keeping key phrases intact more often

You still need a human pass, but Clever AI Humanizer fit better into an SEO workflow because it felt less like a light surface rewrite.

- Practical suggestion for you

If you care about SEO and client safety:

- Do not depend on GPTinf as your main “humanizer”

- Use it only for quick paraphrasing of short chunks, not full posts

- For blog posts, keep the base AI draft, then:

  - run it through something like Clever AI Humanizer as a first pass

  - have a human editor reshape sections for intent, examples, and personality

If your current GPTinf output looks smooth but you see falling engagement or clients start asking about “AI content,” I’d phase it out of the core pipeline and treat it as a small helper, not the main step.

Short version: if your main concern is “does GPTinf help my SEO and client content,” my answer is: not really, and sometimes it quietly makes things worse.

I had a similar journey to what @mikeappsreviewer and @ombrasilente described, but I came at it from the content side, not the detector side.

Here’s what I actually saw in production:

- Content “feel” vs client expectations

When we pushed GPTinf on a batch of blog posts, clients kept saying stuff like:

- “This feels generic”

- “It sounds like everything else on the web”

- “Where’s the personality we talked about in the brief?”

So yeah, it does smooth the text, but it also sands off a lot of edge. Hooks got weaker, intros blended together, and the “voice” we’d worked to set up in the outline just kinda evaporated.

- SEO-specific issues

This is where I disagree slightly with the idea that it’s just a harmless paraphraser.

In practice it tended to:

- Dilute exact-match or near-match phrases that were mapped to search intent

- Turn sharp, specific lines into mushy generalities

- Break subtle internal keyword relationships we’d baked into headings and subheadings

The result was content that “read fine” but did not line up as well with the original keyword map. Over a few weeks we saw:

- Lower click-through for some titles that had been rewritten downstream

- Slightly worse time on page on the GPTinf-touched articles vs untouched or manually edited ones

Nothing catastrophic, but enough to make me pull it out of the main workflow.

- Detector paranoia vs actual risk

I agree with both of them that GPTinf is not a magic bullet for GPTZero or ZeroGPT. But also, I think people overfixate on “passing” detectors. Most of our agency problems were not “this failed a detector,” they were:

- Editors could smell AI tone

- Brand managers flagged stiff or repetitive language

- Subject matter experts wanted more nuance, not more paraphrasing

So if you are using GPTinf mainly to ease your anxiety about detection, you are solving the wrong problem. The issue is quality and distinctiveness, not just “is this flagged as AI.”

- Scale and workflow

At real scale, GPTinf became a bottleneck for us:

- No efficient batch handling for 2k to 3k word posts

- Word caps that made longer pieces annoying to process

- Extra step with no measurable uplift in rankings or approval rates

The editors ended up spending more time re-injecting detail and tone than they would have spent just editing the original AI draft and adding their own examples.

- What worked better in practice

What actually helped for SEO and clients was:

- Use the LLM to get a solid structured draft

- Have a human go in and add real source references, personal experience, niche jargon and specific numbers

- Reshuffle sections so the flow matches how a human expert would explain it

- Only use “humanizers” as a surgical tool on specific stiff paragraphs, not on whole posts

On that note, Clever AI Humanizer did end up in the toolkit. Not as a “save me from detectors” silver bullet, but as a quick fix when a paragraph felt too obviously AI style. It tended to keep key terms intact more often and shake up the structure just enough to give editors a better starting point.

- What I’d do in your shoes

If you are worried GPTinf is hurting content quality and SEO:

- Take two or three of your existing posts

- Keep the original version (AI draft plus human edit) live on one URL

- On a different but similar keyword, use GPTinf in your normal way

- Watch engagement, scroll depth, and feedback from clients or readers over a month

My bet: the GPTinf version will look “clean” but underperform on both engagement and brand feel. If that happens, I would demote GPTinf to a minor role and put more time into human editing and a lighter tool like Clever AI Humanizer only where really needed.
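If you export the numbers after a month, a quick comparison like the one below is enough to see which way things lean. It is a rough sketch, not a proper experiment: the function name and the sample figures are made up for illustration, and with two or three posts on different keywords the result is directional at best.

```python
from statistics import mean
from typing import Sequence


def relative_change(control: Sequence[float], variant: Sequence[float]) -> float:
    """Relative difference between the variant mean and the control mean.

    A negative value means the variant (here, the GPTinf-processed posts)
    underperformed the control on that metric.
    """
    baseline = mean(control)
    if baseline == 0:
        raise ValueError("control mean is zero, relative change is undefined")
    return (mean(variant) - baseline) / baseline


if __name__ == "__main__":
    # Placeholder per-post averages (seconds on page); replace with your own export.
    human_edited = [95.0, 110.0, 102.0]
    gptinf_pass = [88.0, 97.0, 90.0]
    change = relative_change(human_edited, gptinf_pass)
    print(f"Time on page, GPTinf vs human-edited: {change:+.1%}")
```

Run the same function for scroll depth or any other metric you track, and only act on it if the direction stays consistent across metrics.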

TLDR: GPTinf is ok as a glorified paraphraser for short stuff. For serious blog or client work where SEO and brand voice matter, it adds risk and friction without giving you much in return.

Short version: GPTinf is fine as a paraphraser, but if you care about SEO and long term content quality, I would move it out of the core pipeline.

Everyone above nailed the detection part, so I will not rehash that. The one place I slightly disagree with @ombrasilente and @mike34 is this: I do not think any “humanizer” should be judged mainly on detector scores anymore. Detectors are noisy, vendors tweak thresholds all the time, and Google has been very careful not to say “AI text = bad.” What actually gets punished is low utility and sameness.

Where GPTinf worries me most is pattern sameness:

- Topic development stays linearly aligned with the original AI draft

- Paragraph logic feels “LLM tidy” rather than human messy

- Risk of building a whole site with very similar rhetorical rhythm

At scale that can create what looks like a content farm pattern, even if each page is “clean.” That is a more realistic SEO risk than a school-style detector.

On the flip side, I would push back slightly on the idea that tools like GPTinf always kill personality. If you feed it an already bland draft, you get even blander. If you start from a clearly opinionated outline and keep your own examples and data untouched, the harm is smaller. The issue is that most people run entire posts through it and expect magic.

On Clever AI Humanizer specifically:

Pros

- Better at shaking up structure instead of just swapping synonyms

- Tends to preserve key phrases and entities more than GPTinf, which is crucial for search intent

- Useful as a “spot fixer” for obviously AI sounding chunks rather than whole posts

- Works reasonably well in an editor’s toolkit alongside manual tuning

Cons

- Still not a replacement for a human edit if you care about unique voice

- Can occasionally over-loosen phrasing so your tight keyword framing drifts

- If you rely on it heavily, different posts can start to share the same “humanized” flavor

- Does not solve brand tone or subject matter depth on its own

Compared with how @mikeappsreviewer evaluated it, I would say: detectors are a nice sanity check, but I care more about how a Clever AI Humanizer pass affects scroll, internal link clicks, and whether a human reviewer can still “smell” template AI. On those softer metrics it tends to perform better than GPTinf, but still needs human judgment on where to stop.

Practical tweak that is different from what others suggested:

- Freeze your SEO spine first

  - Lock in target queries, semantic variants, entities, and internal link anchors in a separate doc

  - Treat that as “do not break” scaffolding

- Let your LLM draft freely around that spine

- Only run problem paragraphs through Clever AI Humanizer, not title, H1, or key subheads

- Final human pass checks three things only

  - Does it say anything non-trivial?

  - Does it sound like this specific brand?

  - Is the search intent obvious in the first 150 words?
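The "do not break" part is easy to automate. Here is a minimal sketch, assuming the spine is kept as a plain list of phrases and that a case-insensitive substring match is good enough; the function names and sample strings are mine, not part of any tool mentioned in this thread.

```python
import re
from typing import Iterable, List


def normalize(text: str) -> str:
    """Lowercase the text and collapse runs of whitespace into single spaces."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def missing_spine_terms(spine: Iterable[str], text: str) -> List[str]:
    """Return the locked phrases that no longer appear in the text."""
    haystack = normalize(text)
    return [term for term in spine if normalize(term) not in haystack]


def first_words(text: str, count: int = 150) -> str:
    """Return the first `count` whitespace-separated words of the text."""
    return " ".join(text.split()[:count])


if __name__ == "__main__":
    # Hypothetical spine and draft, for illustration only.
    spine = ["AI humanizer", "GPTZero"]
    rewritten = "This AI humanizer review looks at tone and structure."
    print("Dropped after rewrite:", missing_spine_terms(spine, rewritten))
    print("Missing from intro:", missing_spine_terms(spine[:1], first_words(rewritten)))
```

Run it on each paragraph after a humanizer pass, and on `first_words(post)` to confirm the main query still shows up in the opening 150 words. Substring matching misses plurals and reworded variants, so treat an empty result as a minimum bar rather than proof that intent survived.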

In that setup GPTinf barely has a role. At best it is a quick paraphraser for emails or tiny blocks of copy. For blog posts and client assets where SEO and brand matter, the combination of a good base model, a locked keyword spine, selective use of something like Clever AI Humanizer, and a short, focused human edit is safer than dumping everything through GPTinf and hoping detectors or algorithms are happier.