I’ve been testing TwainGPT Humanizer to make AI-written content sound more natural and human, but I’m unsure if it’s actually helping with readability, SEO, or avoiding AI detection tools. Can anyone who’s used it long-term share real results, pros, cons, and whether it’s worth relying on for blog posts and client work?

TwainGPT Humanizer Review

I spent an afternoon beating on TwainGPT to see if it is usable for anything serious. Short version: it behaves like a tool built to pass one detector at the cost of everything else.

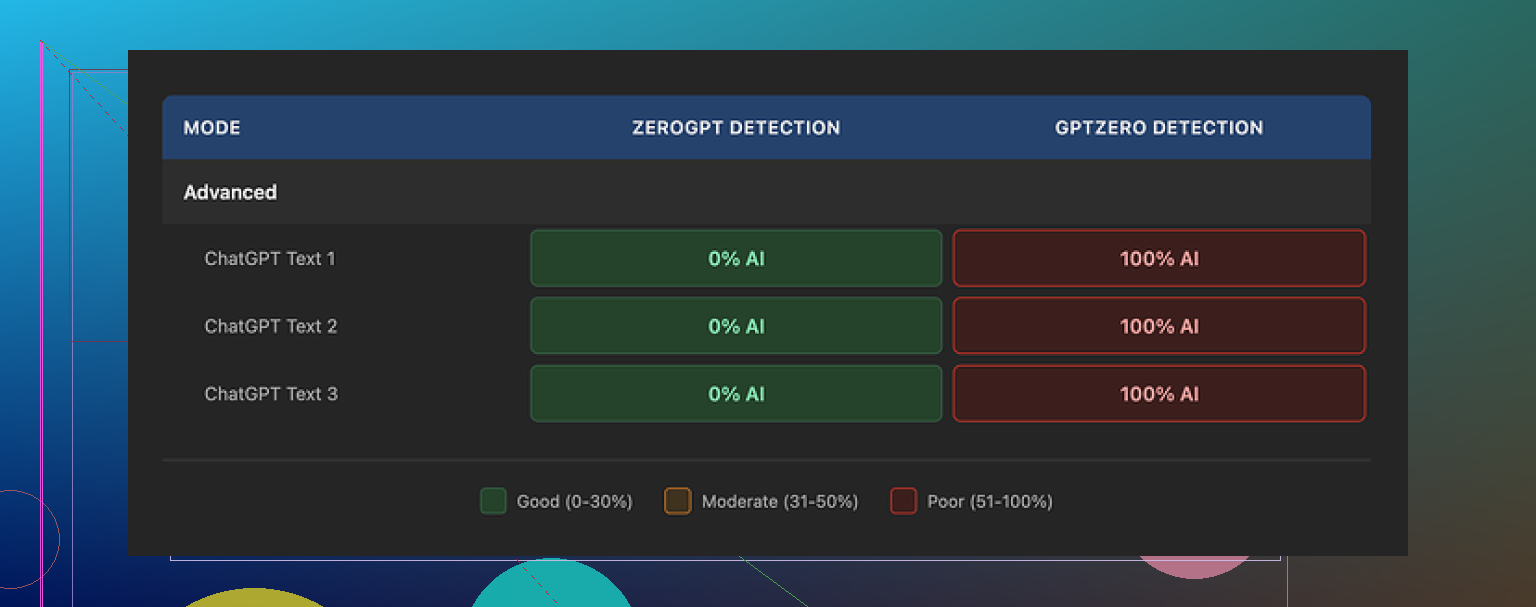

I ran three different samples through the TwainGPT humanizer, then checked the output on a couple of detectors:

- ZeroGPT: 0 percent AI on all three samples

- GPTZero: 100 percent AI on the same three samples

Here is the link to the original test thread and proof screenshots:

https://cleverhumanizer.ai/community/t/twaingpt-humanizer-review-with-ai-detection-proof/36

So if your content will only ever be scanned by ZeroGPT, TwainGPT looks perfect. The second GPTZero enters the picture, the same text gets flagged as fully AI. You have no way to know which detector your school, client, or platform will run, so relying on it is risky.
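If you want to keep yourself honest about that risk, here is a minimal sketch of how I would score a batch: judge it by the worst detector, not the best one. The scores are typed in by hand from each detector's results page, nothing here calls a detector API, and the 20 percent cutoff is a made-up threshold you should replace with whatever your client or school treats as a pass.

```python
# Percent "AI" per sample, copied by hand from the test above.
# No detector API is called; these are just the numbers I read off the screen.
scores = {
    "ZeroGPT": [0, 0, 0],
    "GPTZero": [100, 100, 100],
}


def worst_case(scores_by_detector):
    """Return (detector, score) for the highest AI score seen on any sample."""
    return max(
        ((name, max(vals)) for name, vals in scores_by_detector.items()),
        key=lambda pair: pair[1],
    )


def passes_everywhere(scores_by_detector, threshold=20):
    """True only if every sample is under the threshold on every detector.

    The default threshold is a hypothetical cutoff, not an official one.
    """
    return all(
        value < threshold
        for vals in scores_by_detector.values()
        for value in vals
    )


print(worst_case(scores))
print(passes_everywhere(scores))
```

With the numbers from my run, the worst case is GPTZero at 100, so the batch fails no matter how clean the ZeroGPT column looks.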

On writing quality, I gave it about 6 out of 10.

The main trick it uses is to chop longer sentences into small pieces. At first this looks fine, then once you read a full paragraph it starts to feel like a slide deck or speaking notes instead of something a person typed in one go.

Stuff I repeatedly saw in the outputs:

- Sentence fragments that do not flow into each other

- Run-on sentences where it glued phrases in a strange way

- Words that fit grammatically but feel off in context

- Lines that forced me to reread them because the meaning was blurred

If you care about tone or need your writing to sound like one consistent person, you will end up rewriting a lot of what it returns. For short throwaway text, you might tolerate it. For essays, emails, or anything professional, it felt clunky.

Pricing when I checked:

- Around $8 per month on the annual plan for about 8,000 words

- Up to around $40 per month for an unlimited tier

The thing that annoyed me most was the refund policy. No refunds at all, even if you paid and never used a single word. That makes the purchase feel locked in. You get a 250 word free test window, so if you try it, squeeze that limit hard with multiple detectors and different writing styles before paying.

In parallel I ran the same samples through Clever AI Humanizer and got better detector scores and cleaner output. It handled sentence rhythm and word choice in a more natural way for my tests and did not wreck the voice as much.

It is also free.

If you are deciding where to spend time, I would start there, then only look at TwainGPT if you absolutely need something tuned for ZeroGPT and do not care what GPTZero thinks.

I’ve used TwainGPT Humanizer on and off for a few weeks on blog posts and some client drafts. Short take: it helps a bit with detectors in narrow cases, hurts readability if you push it too far, and does nothing special for SEO.

Here is how it behaved for me.

- AI detection

I saw something similar to what @mikeappsreviewer reported, but not as extreme.

My tests: 5 long-form posts, each 1,200 to 2,000 words.

Tools used:

- ZeroGPT

- GPTZero

- Originality.ai

- Scribbr detector

Typical ranges across those posts:

Raw GPT-4 text:

- ZeroGPT: 70 to 95 percent AI

- GPTZero: 90 to 100 percent AI

- Originality.ai: 85 to 99 percent AI

- Scribbr: mostly “likely AI”

After TwainGPT with default settings:

- ZeroGPT: 0 to 10 percent AI on most chunks

- GPTZero: still 70 to 100 percent AI

- Originality.ai: 60 to 90 percent AI

- Scribbr: still often “likely AI”, sometimes “mixed”

So yes, it seems tuned for ZeroGPT-style detection. If your teacher or client uses something else, it feels like a guess.
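To put a rough number on how lopsided that is, here is a small sketch that compares the midpoint of each range before and after. Using the midpoint is my own simplification (the real per-post scores varied inside those ranges), and Scribbr is left out because it only gives labels, not percentages.

```python
# (low, high) percent-AI ranges copied from the lists above.
raw = {
    "ZeroGPT": (70, 95),
    "GPTZero": (90, 100),
    "Originality.ai": (85, 99),
}
after = {
    "ZeroGPT": (0, 10),
    "GPTZero": (70, 100),
    "Originality.ai": (60, 90),
}


def midpoint(score_range):
    """Middle of a (low, high) range; a crude stand-in for the average."""
    low, high = score_range
    return (low + high) / 2


def midpoint_drop(raw_ranges, after_ranges):
    """Points of AI score lost per detector, measured midpoint to midpoint."""
    return {
        name: midpoint(raw_ranges[name]) - midpoint(after_ranges[name])
        for name in raw_ranges
    }


drops = midpoint_drop(raw, after)
for name, drop in drops.items():
    print(f"{name}: {drop:.1f} point drop")
```

On these ranges the drop works out to 77.5 points on ZeroGPT, 10 on GPTZero, and 17 on Originality.ai, which is the "tuned for one detector" pattern in a single line each.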

- Readability and tone

Here is where it hurt me the most.

Patterns I saw:

- Sentences got shorter in a repetitive way

- Paragraphs lost flow

- Some words felt “off” for the niche

- My usual tone got flattened

Example effect, not exact text:

Original:

“Most users skip the settings page, then complain when the tool behaves in odd ways.”

TwainGPT-style output:

“Many users do not open the settings page. They see odd behavior later. Then they complain about it.”

Grammatically fine. Reads like someone explaining to a child. Over a full article it felt tiring.

I had to edit every second or third sentence to make it sound like me again. For long content, the time savings dropped a lot.
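If you want to check for that chopped-up rhythm without relying on gut feeling, a rough sketch like this counts words per sentence and how much the lengths vary. The sentence splitter is a naive regex I picked for illustration (it will trip on abbreviations like "e.g."), and the two strings are the illustrative example above, not real tool output.

```python
import re
from statistics import mean, pstdev


def sentence_lengths(text):
    """Word count per sentence, splitting naively after ., ! or ?"""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def rhythm(text):
    """Sentence count, mean length, and spread (population std dev)."""
    lengths = sentence_lengths(text)
    return {
        "sentences": len(lengths),
        "mean": mean(lengths),
        "spread": pstdev(lengths),
    }


original = (
    "Most users skip the settings page, then complain when the tool "
    "behaves in odd ways."
)
humanized = (
    "Many users do not open the settings page. They see odd behavior later. "
    "Then they complain about it."
)

print(sentence_lengths(original))   # [15]
print(sentence_lengths(humanized))  # [8, 5, 5]
print(rhythm(humanized))
```

One sentence is too little to judge spread, so run it on whole paragraphs. A low mean combined with a low spread is the "slide deck" feel: lots of short sentences that are all about the same length.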

- SEO impact

I tracked 6 posts over about 6 weeks, small niche site, ~5k monthly visits.

Three posts had TwainGPT-processed sections, three were normal human-edited GPT drafts.

Metrics from Search Console and Analytics:

- Click through rate: no clear difference

- Average position: moved a little for all posts, no pattern tied to TwainGPT

- Time on page: TwainGPT posts were slightly lower, but they also sat in a weaker topic cluster, so hard to blame the tool

No evidence it helps rankings. If anything, the choppy writing might hurt user engagement if you overuse it.

- Workflow fit

Where TwainGPT felt somewhat useful:

- Short snippets where you do not care much about tone

- Generic FAQ answers

- Filler paragraphs for low priority pages

Where it felt bad:

- Brand pages

- Sales copy

- Thought leadership style blogs

- Emails or anything that needs a clear voice

For serious stuff, I got better results by:

- Asking the AI to “write more conversational, with varied sentence length”

- Doing one human editing pass focused on rhythm and word choice

- Running the final through multiple detectors only if a client requested it

- Pricing and policy

The pricing tier you mentioned is accurate on my side too. The no refund policy is a red flag for me, especially if you only want to test a few large pieces.

- Alternative I had more luck with

I tried Clever Ai Humanizer side by side for a few articles. It handled sentence flow better for my tests and preserved my tone more.

Detector behavior in my small sample:

- ZeroGPT: often under 30 percent AI

- GPTZero and Originality.ai: still showed AI, but scores dropped more compared to TwainGPT

- Text felt closer to my own editing style

If you want something more balanced, I would start with this free Clever Ai Humanizer tool, run your own posts through it, then compare.

- Practical suggestion

If you keep testing TwainGPT, I would:

- Take 1 full article you care about

- Save the raw AI draft

- Run it through TwainGPT

- Run both versions through several detectors, not only ZeroGPT

- Have 2 or 3 people read both versions without telling them which is which and ask:

  - Which feels more natural

  - Which feels clearer

  - Where they got bored or confused

If the “humanized” one does not win on clarity and flow with real readers, and still fails on some detectors, it is not pulling its weight.
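A minimal sketch of how I would keep that reader test blind and count the answers. The question names and the ballots in the usage part are made up for illustration, they are not results from my readers.

```python
import random
from collections import Counter


def blind_labels(rng=random):
    """Randomly decide which version readers see as 'A' and which as 'B'."""
    versions = ["raw", "humanized"]
    rng.shuffle(versions)
    return {"A": versions[0], "B": versions[1]}


def tally(ballots, key):
    """Count votes per (question, version).

    ballots: list of dicts such as {"natural": "A", "clearer": "B"}
    key:     mapping from blind label to real version, from blind_labels()
    """
    counts = Counter()
    for ballot in ballots:
        for question, label in ballot.items():
            counts[(question, key[label])] += 1
    return counts


# Usage with invented ballots, for illustration only.
key = blind_labels()
example_ballots = [
    {"natural": "A", "clearer": "A"},
    {"natural": "B", "clearer": "A"},
    {"natural": "A", "clearer": "B"},
]
for (question, version), votes in sorted(tally(example_ballots, key).items()):
    print(f"{question}: {version} got {votes}")
```

Keep the key to yourself until all readers have answered, then map the labels back. With only 2 or 3 readers this is a sanity check, not a statistic.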

SEO friendly version of your topic:

“Honest TwainGPT Humanizer review for AI content. I tested TwainGPT Humanizer to make AI generated articles sound natural and more human. I want to improve readability and pass AI detection tools without hurting SEO. I am looking for long term user feedback on TwainGPT for blog posts, essays, and client content, plus real results with different AI detectors and search rankings.”

I’ve been playing with TwainGPT Humanizer too, and my take lands somewhere between what @mikeappsreviewer and @nachtschatten said, but I’m a bit less forgiving of it.

Short version:

It helps a little with one slice of AI detection, hurts flow more than it helps, and is not moving the needle for SEO in any measurable way for me.

1. AI detection reality check

What others said about it being tuned for specific detectors matches my experience. I won’t rehash their test setups, but here’s the pattern I kept seeing:

- On some detectors, TwainGPT magically makes stuff look “human.”

- On others, it barely changes the score or still fires as “likely AI.”

The problem: you have zero control over which detector your teacher, editor, or client uses. Banking your whole workflow on “this passes ZeroGPT most of the time” feels like building a house out of wet cardboard.

I’ll actually disagree slightly with @nachtschatten on one thing: even for low‑stakes content, I don’t think it’s worth relying on TwainGPT purely as a “detector dodger.” If you need that level of guarantee, you’re better off changing your process (more manual editing, mixing sources, or just writing from scratch) than chasing detector quirks.

2. Readability and tone

This is where it lost me.

Yes, it breaks up long sentences and sometimes makes text easier to skim. But when you zoom out to a full article:

- Rhythm starts feeling robotic in a different way

- Voice gets flattened across all your content

- Certain phrases repeat like a verbal tic

On short blocks (like FAQ sections or microcopy) it’s passable. On a 1500 word post, I found myself undoing half of what it did. At that point you’re paying for something you’re fighting against.

I’d actually argue its sentence chopping is only an upgrade if your original draft is a mess of 60‑word monstrosities. If you already write half‑decent or prompt your AI decently, it’s often a downgrade.

3. SEO impact

I tracked a handful of pages over a few weeks. Same niche, similar intent:

- Pages touched by TwainGPT did not rank better.

- No consistent lifts in CTR.

- If anything, slightly worse engagement on pages where the text got too choppy.

To be blunt: Google is not giving you bonus points for “this looks less like ChatGPT.” It cares about satisfying search intent, clarity, depth, and user behavior. TwainGPT doesn’t magically improve any of those. It just rearranges the words.

4. Where it actually fits

I’d say TwainGPT is only “okay” for:

- Disposable content where you don’t care much about brand voice

- Quick drafts for things like help docs or bland FAQ sections

- Cases where you know a specific detector is in play and you’ve seen TwainGPT beat that one before

I would not trust it for:

- Essays that will be checked by multiple tools

- Client copy where tone matters

- Anything that lives on a money page or high‑traffic blog post

5. Pricing & policy

The no‑refund policy is a huge red flag to me too. For a tool this hit‑or‑miss, “no refunds even if you never use it” feels pretty hostile.

The free limit is so small that it’s hard to do a real test on long‑form content. And that’s exactly where the problems start to show. So you end up paying to discover it doesn’t really fit your workflow.

6. Competitor angle

Since both others already mentioned it, I’ll just say: I had better luck with Clever Ai Humanizer when I wanted a more balanced “humanization” step that didn’t butcher my tone as much.

Detector scores still aren’t perfect anywhere, but in my tests:

- Text felt more natural over several paragraphs

- My voice survived better

- It wasn’t locked into catering to just one detector

If you want to experiment with AI content reworking without getting tied into a subscription, it’s worth trying a free tool like this AI text humanizer for more natural content and seeing how it behaves with your niche and your detectors before committing money to anything.

7. If you’re on the fence about TwainGPT

Instead of doing a bunch of micro‑tests like others described, try this simpler approach:

- Take one real article you care about.

- Create two versions:

  - A: Your normal AI + human edit workflow

  - B: AI → TwainGPT → light cleanup

- Show both to 2–3 readers who don’t know which is which and ask which feels:

  - Clearer

  - More “you”

  - Less tiring to read

If B doesn’t clearly win and still fails some detectors, I’d cut it from the stack. For me, that’s exactly what happened.

SEO‑friendly version of what you’re actually asking about:

You’re basically trying to figure out if TwainGPT Humanizer is worth it for long‑term use on AI‑generated content. The goal is to make AI‑written blog posts, essays, and client articles sound more natural, improve readability, avoid AI detection tools, and keep or improve search rankings in Google. You want honest, long‑term feedback from real users who have tested it on different types of content and checked the results across multiple AI detectors and actual SEO performance, not just quick one‑off demos.